Toni_


Hi again,


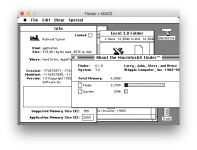

In other news, here's the latest advancement in development: we can now run a PC emulator inside M.A.C.E., so basically the emulator can emulate emulators.

https://mace.software/2019/11/27/softpc-and-the-quest-for-the-xt-memory-map/

Sorry for the slow development pace; real-life work is slowing us down at the moment, but we're making progress every week. The goal is still to reach some sort of generic release of the Phase 1 emulator (in addition to the bundled apps), but it will still contain a bunch of unfinished Phase 1 features, which may limit application compatibility. I think the next target is to implement a "Shared" filesystem module for M.A.C.E., which would allow cross-process file access as well as access to native HFS+ files. We just want to make everything perfect, so you guys won't be disappointed by bugs and unimplemented features that are still in progress.

Here are some replies to some earlier comments:

I think the basic idea of how Apple did the Mac OS 9 nanokernel was to abstract the lower-level hardware behind the nanokernel interface and run Mac OS 9 on top of it as a single task (so normal applications running on it would not directly benefit from the multitasking and memory protection features). I read that this abstraction was one of the key elements in running the actual Mac OS 9 in the Classic environment, where the low-level nanokernel was replaced with the Classic runtime, allowing most of Mac OS 9 running on top of it to remain intact. There was some discussion here: https://groups.google.com/forum/#!msg/comp.sys.mac.programmer.help/tO0iuTNETGc/oTwfHPfuqXoJ

This is why I was thinking of the nanoKernel. Apple had a solution for running older cooperative code alongside preemptive, memory-protected code (I don't understand the details of how they did that, but my understanding is that the nanoKernel was the key that made it happen). Rather than using the host OS for program-to-program communication, reproduce the parts (nanoKernel?) that Apple made to do that and keep them inside the emulation environment.

Is that a reasonable idea?
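To make the layering idea a bit more concrete, here is a toy Python model. It is not Apple's actual design, and every class and method name in it is hypothetical: the OS layer only talks to an abstract lower-level interface, so the bottom layer can be swapped from a "nanokernel" to a "Classic runtime" without touching the code above it.

```python
from abc import ABC, abstractmethod

PAGE_SIZE = 4096


class LowLevelInterface(ABC):
    """Hypothetical stand-in for the nanokernel interface: the only
    services the OS layer above is allowed to use."""

    @abstractmethod
    def name(self) -> str: ...

    @abstractmethod
    def allocate_pages(self, count: int) -> int:
        """Return the base address of `count` freshly allocated pages."""


class NativeNanokernel(LowLevelInterface):
    """Would drive the real hardware; here it just hands out pages from 0."""

    def __init__(self) -> None:
        self._next_page = 0

    def name(self) -> str:
        return "nanokernel"

    def allocate_pages(self, count: int) -> int:
        base = self._next_page * PAGE_SIZE
        self._next_page += count
        return base


class ClassicRuntime(LowLevelInterface):
    """Replacement bottom layer that would forward requests to a host OS;
    here it hands out pages from a hypothetical host-provided region."""

    def __init__(self, host_base: int = 0x1000_0000) -> None:
        self._host_base = host_base
        self._used_pages = 0

    def name(self) -> str:
        return "classic-runtime"

    def allocate_pages(self, count: int) -> int:
        base = self._host_base + self._used_pages * PAGE_SIZE
        self._used_pages += count
        return base


class MacOS9:
    """Upper layer: the same code whichever bottom layer it runs on."""

    def __init__(self, lower: LowLevelInterface) -> None:
        self.lower = lower

    def boot(self) -> str:
        system_heap = self.lower.allocate_pages(16)
        return f"booted on {self.lower.name()} with system heap at {system_heap:#x}"


for bottom in (NativeNanokernel(), ClassicRuntime()):
    print(MacOS9(bottom).boot())
# booted on nanokernel with system heap at 0x0
# booted on classic-runtime with system heap at 0x10000000
```

In this sketch, swapping the bottom layer is what lets the upper layer stay intact; how the real nanokernel exposes its services is not modelled at all.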

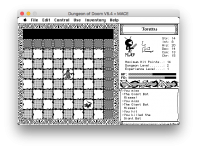

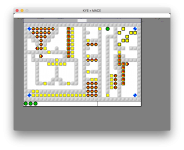

In the old days, hardware limitations more or less forced people to write performance- and space-efficient code, which on current hardware is blazingly fast and small. The downside, however, is that a lot of the old system software (Toolbox APIs, etc.) and many applications skip a lot of sanity checks, and tolerate even buggy behaviour without immediately crashing the system or showing side effects. One example is the game "BattleCruiser", which I tried recently: it calls DisposeHandle on a resource handle, and the bug does not surface until that handle is accessed through the resource map the next time (in that case, during ExitToShell -> CloseResFile). Another example is actually from one of my very early shareware games, aWorm, which I noticed does not set a valid GrafPort with SetPort before calling CopyBits on the window. By luck this works most of the time, but if the current port happens to be the screen port, it crashes when trying to use that non-window GrafPort as a WindowPeek. Good catch, 21 years after making that game...

Yes, it is! I think there is a community-wide yearning for a smaller, simpler system that's both performant on a range of hardware AND doesn't corral us into walled gardens!! Six months ago I was doing some research into SK8 (HyperCard on steroids) and I was BLOWN AWAY by how FAST emulating Mac OS 7.6.1 on 68K was!!! It blew my mind! The ONLY reason I'm not primarily running classic Mac OS today is that the emulators are not reliable.
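A toy model of why a bug like the BattleCruiser one stays hidden for so long, assuming a much-simplified resource map. The classes and helpers below are hypothetical stand-ins, not Toolbox APIs: disposing the handle frees it, but nothing notices until closing the resource file walks the map again.

```python
class HandleError(Exception):
    """Raised when the toy resource map touches an already-disposed handle."""


class Handle:
    """Hypothetical stand-in for a Memory Manager handle."""

    def __init__(self, data: bytes) -> None:
        self.data = data
        self.disposed = False


def dispose_handle(h: Handle) -> None:
    # In this model, as in the bug described above, nothing checks whether
    # the handle is still owned by a resource map; the block is just freed.
    h.data = b""
    h.disposed = True


class ResourceFile:
    """Much-simplified resource file with a map of loaded resources."""

    def __init__(self) -> None:
        self.resource_map: dict[tuple[str, int], Handle] = {}

    def get_resource(self, res_type: str, res_id: int) -> Handle:
        key = (res_type, res_id)
        if key not in self.resource_map:
            self.resource_map[key] = Handle(b"resource data")
        return self.resource_map[key]

    def close(self) -> None:
        # Closing walks the map and releases every loaded resource. This is
        # the first time since the bad dispose that anyone touches the handle.
        for key, h in self.resource_map.items():
            if h.disposed:
                raise HandleError(f"resource {key} was already disposed")
            h.disposed = True
        self.resource_map.clear()


game_file = ResourceFile()
pict = game_file.get_resource("PICT", 128)
dispose_handle(pict)  # the bug: the correct call here would be ReleaseResource
# ... the game keeps running normally for as long as it likes ...
try:
    game_file.close()  # reached much later, e.g. via ExitToShell -> CloseResFile
    outcome = "closed cleanly"
except HandleError as err:
    outcome = str(err)
print(outcome)  # resource ('PICT', 128) was already disposed
```

An emulator that adds this kind of check at close time can at least point at the offending resource instead of failing somewhere unrelated.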

Something akin to this would truly be a dream OS, and it was one of the motivations for writing the boot loader I mentioned in an earlier post. However, we'll have to see how soon we get there... Now that Apple is, sadly, making it more difficult to publish independent software even on macOS, there is growing motivation to be able to host the emulation on systems other than Apple's own.

This "dream" is certainly the core of my earlier post!

Perhaps this is diverging off topic, but maybe "the dream" needs to be defined.

1) A reliable emulator for running existing software

(The M.A.C.E. project is VERY exciting! I'm actually considering using HyperCard for REAL projects again once it is up and running.)

2) An independent Mac OS 7/9 operating system, capable of running original software (FULL compatibility not required), with a NanoKernel included to provide a preemptive, memory-protected space for "new" community-created applications.

3) Poor man's Copland. Simplified versions of "some" of the Copland features. Document-Centric computing would be my #1 vote! OpenDoc compatibility NOT required.

Like I said, it's the "dream".

What do you guys think? Does it match your thoughts?

It is true that Apple Events can be easily abstracted. However, they are not the only means of sharing information between applications. There's also the PPC Toolbox and the Process Manager APIs, and since the Mac has a linear memory space with no memory protection, any process can share information with another process simply by finding a way to pass valid pointers/handles to another application's zone, and there is no way to prevent that on Mac OS. There may also be other cases; for example, I haven't looked much into the CFM shared library mechanism yet, but I'm sure those will come up eventually.

Cross-application communication could be done easily using Apple Events.

Each application could run in its own Toolbox environment.
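A small sketch of why raw pointer passing gets in the way of running each application in its own Toolbox environment, assuming a simplified flat memory model (the `Memory` class and `send_pointer` helper are hypothetical). In one shared address space the bare address means the same thing to the receiver; with separate per-application memories the same number points at unrelated data.

```python
class Memory:
    """A flat byte-addressed memory, like the single 68k address space."""

    def __init__(self, size: int) -> None:
        self.bytes = bytearray(size)

    def write(self, addr: int, data: bytes) -> None:
        self.bytes[addr:addr + len(data)] = data

    def read(self, addr: int, length: int) -> bytes:
        return bytes(self.bytes[addr:addr + length])


def send_pointer(sender_mem: Memory, receiver_mem: Memory) -> bytes:
    """Process A stores a message in its own zone and hands only the raw
    address to process B, which dereferences it in the memory it sees."""
    addr = 0x2000  # hypothetical location inside A's application zone
    message = b"Hello from A"
    sender_mem.write(addr, message)
    pointer = addr  # all that crosses between the processes is this number
    return receiver_mem.read(pointer, len(message))


# Classic Mac OS: every application shares one address space.
shared = Memory(0x10000)
print(send_pointer(shared, shared))  # b'Hello from A'

# One emulated Toolbox environment per application: separate memories.
a_mem, b_mem = Memory(0x10000), Memory(0x10000)
print(send_pointer(a_mem, b_mem))  # 12 zero bytes: the address means nothing in B's memory
```

Apple Events carry their data by value, so they survive this split; anything that smuggles pointers or handles across does not.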

It seems like Elliot Nunn is disassembling the Classic ROM.

Actually, the header does have one. But true, technically it is not a big challenge to recalculate it.

The MacBinary format does not have a CRC checksum.
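For reference, a sketch of how the MacBinary II header CRC can be recalculated and checked, based on our reading of the MacBinary II layout: bytes 124-125 hold a CRC-16 (CCITT polynomial 0x1021, initial value 0, as used by XMODEM) over the first 124 bytes of the header, and the data and resource fork lengths are big-endian 32-bit values at offsets 83 and 87. The helper names are our own, and fields such as dates and Finder flags are simply left zero.

```python
import struct

HEADER_SIZE = 128
CRC_OFFSET = 124  # the CRC covers header bytes 0..123


def crc16_ccitt(data: bytes, crc: int = 0) -> int:
    """Bitwise CRC-16 with polynomial 0x1021 and initial value 0 (XMODEM style)."""
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def build_macbinary2_header(name: bytes, file_type: bytes, creator: bytes,
                            data_len: int, rsrc_len: int) -> bytes:
    """Fill in a minimal MacBinary II header; unlisted fields stay zero."""
    if not 1 <= len(name) <= 63:
        raise ValueError("file name must be 1..63 bytes")
    if len(file_type) != 4 or len(creator) != 4:
        raise ValueError("type and creator must be 4 bytes each")
    h = bytearray(HEADER_SIZE)
    h[1] = len(name)                 # Pascal-style name length
    h[2:2 + len(name)] = name        # file name
    h[65:69] = file_type             # e.g. b"TEXT"
    h[69:73] = creator               # e.g. b"ttxt"
    struct.pack_into(">II", h, 83, data_len, rsrc_len)  # fork lengths
    h[122] = 129                     # MacBinary II version used to write
    h[123] = 129                     # minimum version needed to read
    struct.pack_into(">H", h, CRC_OFFSET, crc16_ccitt(bytes(h[:CRC_OFFSET])))
    return bytes(h)


def header_crc_ok(header: bytes) -> bool:
    """Recalculate the CRC and compare it with the stored value."""
    (stored,) = struct.unpack_from(">H", header, CRC_OFFSET)
    return stored == crc16_ccitt(header[:CRC_OFFSET])


hdr = build_macbinary2_header(b"ReadMe", b"TEXT", b"ttxt", data_len=1000, rsrc_len=0)
print(header_crc_ok(hdr))  # True
```

Python's `binascii.crc_hqx(data, 0)` should compute the same CRC if you'd rather not hand-roll it.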

The file operations always operate on data on disk, as a 1:1 mapping to Mac OS Toolbox APIs. So when, for example, a Mac application makes a _SetEOF call, we only do an ftruncate call on the native side (with appropriate error checking, etc.). I think the feature you are referring to might be disk caching, which caches file contents in RAM. All of this, however, is delegated to the host system, because handling an additional RAM cache in the emulator would be unnecessary and complex work, and might even risk data corruption, for example if the emulator crashes before the data is flushed to disk. The aim is not to reduce data size, but to reduce the complexity of operations and thus improve reliability.

This explanation does not make sense. Do you not keep both the data and resource forks in RAM? MacBinary is 3 parts: a 128-byte header, followed by the data fork, followed by the resource fork. Surely writing these 3 parts in one combined operation is no worse than writing 2 separate files to disk. You're not reducing the size of data written to disk by using AppleDouble instead of MacBinary.
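A minimal sketch of the 1:1 _SetEOF -> ftruncate mapping described above, written in Python for brevity rather than taken from M.A.C.E. itself. The result-code values are the classic File Manager ones (noErr, dskFulErr, ioErr, wPrErr, paramErr, rfNumErr, wrPermErr); the errno-to-result-code table and the `set_eof` name are hypothetical choices of our own. Any caching is left entirely to the host.

```python
import errno
import os
import tempfile

# Classic Mac OS result codes returned by the File Manager.
NO_ERR = 0
DSK_FUL_ERR = -34   # dskFulErr: disk full
IO_ERR = -36        # ioErr: generic I/O error
W_PR_ERR = -44      # wPrErr: volume is write-protected
PARAM_ERR = -50     # paramErr: bad parameter
RF_NUM_ERR = -51    # rfNumErr: bad file reference number
WR_PERM_ERR = -61   # wrPermErr: write permission error

# Hypothetical translation of host errno values into Mac OS result codes;
# anything not listed falls back to ioErr.
ERRNO_TO_OSERR = {
    errno.ENOSPC: DSK_FUL_ERR,
    errno.EROFS: W_PR_ERR,
    errno.EBADF: RF_NUM_ERR,
    errno.EACCES: WR_PERM_ERR,
    errno.EPERM: WR_PERM_ERR,
}


def set_eof(host_fd: int, logical_eof: int) -> int:
    """Emulate _SetEOF by truncating or extending the host file directly.

    No RAM cache is involved: the new length goes straight to the host,
    which does its own caching and flushing.
    """
    if logical_eof < 0:
        return PARAM_ERR
    try:
        os.ftruncate(host_fd, logical_eof)
    except OSError as exc:
        return ERRNO_TO_OSERR.get(exc.errno, IO_ERR)
    return NO_ERR


with tempfile.TemporaryFile() as f:
    f.write(b"0123456789")
    f.flush()
    print(set_eof(f.fileno(), 4))        # 0 (noErr)
    print(os.fstat(f.fileno()).st_size)  # 4
```

Keeping the emulator side this thin means a crash in the emulator cannot leave unflushed fork data behind in its own buffers.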
