Hello

@Xero, I just wanted to thank you for this software, no matter how you did it, as I don't care. I have long dreamed of a tool that is easy to install and replicates the navigation and file manipulation commands of Unix/Linux in Mac OS Classic, in addition to having networking capabilities.

I'm sorry about the reception you're getting here, it's frankly ridiculous and impolite to say the least.

It makes me wonder if this place is even moderated by anyone. The release of new software for Mac OS Classic is quite a rare thing. I hope this doesn't discourage you from continuing development.

Cheers.

Thank you - I appreciate the support. I felt the same way leading up to this! I want to actually use my old macs to do modern tasks, and if AI can help me achieve this faster, why not use the tools I have available to do so?

And to Rodin, concerning his statues:

"Without the chisel you wouldn't have been able to do this unless you actually sat down and learned how marble is removed.

This is not yours. It's the chisel's"

Curious; in your opinion, does refactoring in an IDE also count as "not yours"? How about autocompletion? How about using intangible charges in invisible silicon structures rather than punching holes in a paper tape? That was *real*!!!

Anyway - AI is a tool. It may be a crappy tool in many cases (my retrocomputing attempts seem to be too far out of AI's comfort zone, or I'm too old to learn new tricks with AI), but if some people can use that tool to be more productive, then more power to them. Of the many areas that AI has invaded, adding features to a retrocomputing SSH client seems to be a harmless one.

My opinion is that the OP's level of disclosure was quite sufficient, and if the resulting code works and abides by the original license of ssheven, then it's great to have a new useful piece of software. It also makes me wonder if AI could be used to add acceleration support to the codebase - I do have a few boards with FPGAs that would make the experience of SSH on '030 and '040 faster...

There's so much irony with all this, considering I would have been equally hating on it all a year or so ago, too. I likewise was thinking about making a similar comparison to IDEs - it's like back in the day when "use notepad or bust" was almost a flex. Like, syntax highlighting is too good for them, lmfao. Or, "frontpage 98/2000? naw I use notepad" lmfao. These were all real sentiments of the past that I remember very well.

Indeed, even to some of my friends that don't like AI, they thought what I've been doing with my retro projects, like coding for IE5 mac, and/or this project, are almost like, anti-projects or "forbidden" projects, because I'm not here looking for venture capital, or profit, or putting on some kind of show about AI. I'm doing it to bring new life to old things, not tout idealism about the future or what not. If anything, it's the haters who have been putting on quite the show for us...

What about libraries? I rarely program from scratch

Indeed. Ironically there's even some odd parallels to music there as well, like, hey, come up with a totally original chord or scale. I have more mixed feelings about the AI music/art stuff, though, I think AI mastering is mostly harmless.

Might be worth a collaboration with the OP -

@Xero - after your previous QuickDraw patch, would you consider helping @Melkhior add more extensive graphics acceleration to his FPGA-based graphics hardware?

It would be interesting to see if it could work on optimising performance under specific load cases, weighed against the effort / real estate required in the FPGA.

It'd be interesting - for test cases you could define the full range of inputs and then get it to bulk test the non-optimised behaviour vs the optimised behaviour, which would go some way towards validating it.
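To make that concrete, here is a rough sketch of the bulk-compare idea in Python. It is not taken from anyone's actual code: the four modes and the function names (`reference_op`, `optimised_op`, `bulk_compare`) are made up for illustration, with a deliberately slow per-bit version standing in for the "non-optimised behaviour" and a whole-byte version standing in for the optimised one.

```python
from itertools import product

# Hypothetical set of combine modes, used only for this illustration.
MODES = ("copy", "or", "xor", "bic")


def reference_op(mode, src, dst):
    """Slow, obviously-correct version: combine one bit at a time."""
    out = 0
    for bit in range(8):
        s = (src >> bit) & 1
        d = (dst >> bit) & 1
        if mode == "copy":
            r = s
        elif mode == "or":
            r = s | d
        elif mode == "xor":
            r = s ^ d
        else:  # "bic": clear destination bits where the source bit is set
            r = d & (1 - s)
        out |= r << bit
    return out


def optimised_op(mode, src, dst):
    """Fast version under test: whole-byte bitwise operations."""
    if mode == "copy":
        return src
    if mode == "or":
        return src | dst
    if mode == "xor":
        return src ^ dst
    return dst & ~src & 0xFF  # "bic"


def bulk_compare(reference, candidate, values=range(256)):
    """Run both versions over every (mode, src, dst) and collect mismatches."""
    mismatches = []
    for mode, src, dst in product(MODES, values, values):
        if reference(mode, src, dst) != candidate(mode, src, dst):
            mismatches.append((mode, src, dst))
    return mismatches


if __name__ == "__main__":
    bad = bulk_compare(reference_op, optimised_op)
    print("mismatches:", len(bad))
```

With a byte-sized input space the whole range can be enumerated; for wider inputs you would presumably fall back to edge cases plus random sampling, so an empty mismatch list only goes "some way" towards validation, as noted above.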

I'm totally down to try and help! I think I saw some of the projects trying to create new FPGA cards for these old machines, but they seemed a bit unobtainable. If I could help make this stuff more accessible, I'm all for it.

AI is the antithesis to our hobby, and it drives genuine people with the spirit of curiosity who wish to create and collaborate away.

The original author of this software does not desire this. So you should feel bad.

It's unfortunate he feels that way. I actually was commenting on his GitHub issues and even submitted a workaround, a little under a year ago now, but the project seemed to have stalled out. And, FWIW, that was all before I was using AI. I think I stated in my original post that there were some bugs I'd found that hadn't gotten fixed. I didn't start this project thinking I was competing with anything under active development. I would have reached out and tried to collaborate more, had I seen evidence of such. However, if their own attitude towards AI is so bleak, we likely wouldn't have seen eye-to-eye anyway. If he's worried about AI replacing him at his job as stated in the blog post, that should be seen as a sign to get ahead of the curve. Frankly, I'd suggest the same to any of you. I don't think people should look at it that way, though. It helps you do your job faster. It only takes your job if you let everyone else get ahead of you. That was never not the case in this industry, though. Things have always been like that.

I think there's also some misrepresentations in his post. I did a commit to make the naming of files more generic, but it still literally says "Based on ssheven by cy384" on the splash screen and throughout every file. A simple grep is proof enough, there's well over 20 references to his name & the original project name appears at least 14 times in various notices/comments/etc. I consider it best practice not to hard code stuff like that in file names, especially since it's no longer *just* an ssh client, it just didn't make sense. It was never intended to remove credit where credit's due, and his name & credit is still very much all over the project, literally in the splash screen, I don't have any plans to change that.

However, I can also understand it's a weird feeling when someone forks your project and imparts their stylistic choices on it. I had similar feelings when one of my home automation projects got forked many years ago. It wasn't the greatest feeling, even though I'm all about open source. I don't want him to feel like this project is a slight towards him, and I empathize with his sentiments, despite not agreeing with everything in his post.

Had that project not existed, I would have just started from scratch, it might have taken more time up front, but we'd likely still be here regardless. Should I feel bad? I tried to help. It'd been nearly a year with no updates. I definitely won't be guilt-tripped because I decided to do something about it. Life moves too fast to sit still. AI just makes it move faster, and I don't see that slowing down just because some people are angry on a forum about it. The world would have stopped a long time ago if that were the case every time that happened!

For QD acceleration, at the moment real estate cost would be fairly low. The *FPGA uses a RISC-V core and some firmware to implement acceleration, not custom hardware - much less area-efficient but easier to implement. The only cost would be additional registers where the Mac can put parameters (effectively, a control structure but in hardware; accel_le is a pointer to the area in the FPGA with those registers) if more are needed, and storage of the firmware - which could be moved away from inside the FPGA to the Flash where the declaration ROM lives.

The primary problem is that the high-level traps are complex to implement, and the simpler low-level traps (like the BitBlit trap my code is accelerating) are undocumented (and they peek and poke in memory, including the stack). If someone could (with or without AI) figure out how to substitute more of those traps with clean C code in an INIT, it would be fairly easy to then offload the C code on the RISC-V core in the FPGA.

Hmm, this definitely sounds like a lot of the same kinda stuff that I was touching with desktopfix, I'd be curious to see where it could go. Heck, AI is even more than happy to disassemble 68k and do assembler patches for me. If there's a clear enough path, and a good way to debug, I suspect I could help!

@Xero, just wanted to point out that if you want to handle the Unicode conversion, you may want to look at how The Unarchiver does it -- it contains a nice heuristic converter that automatically takes Unicode and converts to MacRoman. I've found the logic used there useful in a number of scripts I've written that take Unicode text and filter it for a MacRoman environment.

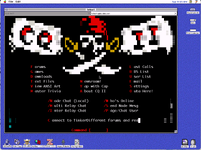

Ahh, wish I'd seen that before! I actually mostly have this done as of last night! It's a two-fold approach: for anything with close-enough MacRoman characters, it does something similar to what you said and maps them to the equivalents. However, for doing crazy stuff like this (Byte Knight's BBS splash screen), it needed more font glyphs. Now there's a Python script which generates custom glyphs in all the font sizes used, and they get embedded as a new font in the resource fork.
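For anyone curious what the "map to close-enough equivalents" half might look like, here is a minimal Python sketch. It is only an illustration and not the code from this project or from The Unarchiver: the `FALLBACKS` table and the `to_macroman` name are invented here. It relies on Python's built-in `mac_roman` codec plus `unicodedata` to strip accents, and reports which characters had no equivalent (those would be the candidates for custom glyphs).

```python
import unicodedata

# Hypothetical hand-picked substitutions for characters with no MacRoman form.
FALLBACKS = {
    "\u2500": "-",   # box drawings light horizontal
    "\u2502": "|",   # box drawings light vertical
    "\u2192": "->",  # rightwards arrow
}


def to_macroman(text):
    """Return (encoded_bytes, unmapped_chars) for a Unicode string."""
    out = bytearray()
    unmapped = set()
    for ch in text:
        # 1. Direct hit: the character exists in MacRoman.
        try:
            out += ch.encode("mac_roman")
            continue
        except UnicodeEncodeError:
            pass
        # 2. Hand-picked substitution.
        if ch in FALLBACKS:
            out += FALLBACKS[ch].encode("mac_roman")
            continue
        # 3. Decompose and drop combining marks, e.g. an accented "s" becomes "s".
        stripped = "".join(
            c for c in unicodedata.normalize("NFKD", ch)
            if not unicodedata.combining(c)
        )
        try:
            encoded = stripped.encode("mac_roman")
        except UnicodeEncodeError:
            encoded = b""
        if encoded:
            out += encoded
        else:
            # 4. Nothing close enough: emit a placeholder and remember it.
            unmapped.add(ch)
            out += b"?"
    return bytes(out), unmapped
```

Calling `to_macroman(line)` on each incoming line and collecting the `unmapped` sets over a session would give a list of characters worth drawing custom glyphs for.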

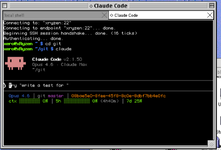

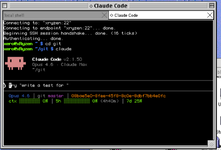

Also, Claude now looks a lot better within it as well! Dual-monitor Quadra 700 w/ Claude sessions, vibing with a vibe. Haha! Admittedly it's a lot faster from QEMU than the real Quadra, but I'm hoping to do some more speed optimizations. Also, xterm-256color support! All mostly working!

Right now I'm having Codex and Claude go back and forth doing code review. I'm hoping to get all that wrapped up before I do the next release, but it should be soon!