Development of Nubus graphics card outputting to HDMI?

- Thread starter dlv

Trash80toHP_Mini

NIGHT STALKER

I brought the NTC, design example, its documentation and the PAL listings to your attention, that's when you bit my head off!

I'm not suggesting that either by the way, I've not been clear. We covered that on the previous page when you answered my question: "Wondering here if more of the NuBus interface might be handled on the FPGA given possible reductions above?" In that light I've been trying (and failing) to express my thoughts in terms of a block diagram and the "NuBus chipset" as one block of logic within the FPGA, or as part of the logic that would need to be added when the time comes to interface with the SRAM.

"I repeat: electrically at least I see no reason why NuBus is harder than PDS."

Understood, actually I never suggested it was. I've only been talking about the implementation of that logic; it's been problematic for engineers to do in the past and may be so at present.

"Remember, almost half the pins on a 96 pin NuBus connector are power or ground. It takes almost 90 pins to do a 32 bit PDS card. Check it yourself."

Very familiar with it; matter of fact, I suggested using NuBus in 16bit mode if a further reduction in pincount might be necessary in the short term. That could be the case for implementing the single bit first run at this project that you've just proposed.

trag covered PDS interface requirements already and better expressed the performance issues:

I'm very familiar with NuBus and PDS in terms of Card and Driver Development AND I understand the pincount thing. Thought I'd prefaced my PDS suggestion with the proviso that it would be better IF the extra I/O lines of PDS could be supported, but couldn't find it.

When Apple introduced and documented the brand spanking new 68030 PDS interface (new as in previous SE/30 release) in the expanded 1990 edition of the DCaD series they made the point over and over that PDS cards must be developed as PseudoSlot "NuBus" implementations. Everything must be kept exactly the same in addressing the PDS as if it were addressing the intervening (and remarkably slower) multiplexed NuBus interface. I haven't been clear enough. It is at that point that PDS diverges from the already in place NuBus model (and has since Mac II and SE were released) and goes straight to the CPU I/O bus. Electrically it may be very different, but I've been saying that logically it's the same at that point.

NuBus saves but a bit less than 32 lines over a PDS implementation, and PDS, when introduced in the SE/30 at 16MHz, could be likened to running on the wet sand at the shoreline as compared to NuBus at 10MHz running in the dry sand. PDS transitioned to 25MHz in a new and oddball Application-Specific Expansion Interface Cache implementation in the IIci and then to the 40MHz true but also oddball PDS implementation in the IIfx, at which point the comparison became more like the IIfx's PDS running on the wet sand, the 16MHz SE/30 PDS running on the dry sand and the IIci somewhere in between. At that point the NuBus interface was running through the surf by comparison.

As trag said there will be no meaningful improvement in performance of a current NuBus design over designs of yore. We're not even talking about QuickDraw acceleration at this point. The performance advantages of implementing the simple frame buffer we are talking about would be the 68k equivalent of the HPV card of the NuBus PPC era. Toby would likely outperform most NuBus cards when pulled out of the restrictions of the NuBus performance envelope and placed on the PDS. With its dedicated dual-ported video memory, the Macintosh II Video Card (Toby) would handily outperform the IIci's system DRAM based built in video. That components would need to be upgraded for its 25MHz bus would be a given. I've proposed developing a Toby based PDS video card in the past for exactly those reasons. That's where the notion of pulling components from it in a literal sense was a real suggestion; doing so in a figurative sense was what I was trying to describe earlier in this current chapter of the topic.

You said earlier that I might need to draw a picture of what I've been trying to get across to you and I think it's time to do exactly that for the next chapter. Words so often, if not always fail me. Bunsen suggested much the same years back and said doing it helped immensely.

I'm sure they've failed me again in this post, but the back and forth has shaken loose another crazy notion that I'll not bring up in this thread.

Trash80toHP_Mini

NIGHT STALKER

Speak of the devil! Actually, I was in the process of quoting you when you posted and so had yet to speak aloud of you at that point.

"I seem to have missed the part where I'm looking for the 040 PDS connector. I think I just asked earlier if anyone knew the part number or where to find it. I didn't mean that I wanted to take up the project. Given enough time, I'll probably look into it, but given enough time, the Sun will turn into a Red Giant...."

:lol: I hear that bit about time! I misunderstood your request and provided pics of the connectors with a couple of different designations printed on them. IIRC you'd made the same request several times in threads past, that's why I jumped in with the pic. That was tangential at the time, but it got me to thinking about that oddball connector interface in a certain Quadra 950.

"In that light I've been trying (and failing) to express my thoughts in terms of a block diagram and the 'NuBus chipset' as one block of logic within the FPGA or as part of logic would need to be added when the time comes to interface with the SRAM"

But why do you think that the block that's labeled "Video" has a "PDS-like" interface on it? It doesn't, or at least it doesn't unless there's a lot of design decisions made that were thrown out there but later discarded. To wit:

Way back in the thread I *did* propose the idea of breaking this across two FPGAs because of the worries about whether it was possible to get enough I/O pins to do everything (the bus interface, the RAM interface, and the video port) with one reasonably-inexpensive and accessible FPGA module. My idea for doing that was essentially having the bus live in one place (probably a different piece of programmable logic), the video in the FPGA, and have them both interface to a chunk of RAM in between them. (Then in one case you only need enough pins for bus glue + RAM interface in one device and RAM interface + HDMI in the other.) In *that* case then, sure, I guess there's some argument to be made that the RAM interface you have hanging off the video component is... kind of PDS like, kind of, remotely, but not really? But I've been looking at how that Amiga card that started this whole thing off is put together, and it looks to me like there are *plenty* of pins to implement Nubus; their implementation of "68000 PDS" requires 64 pins, which is almost 16 more than we need for Nubus. Full width 68030 is going to be another 24 pins if we assume all the other signals other than address and data are also present and needed. So that's why I estimated 90. That is flatly a dealbreaker for the FPGA module they used in the design referenced by the OP, it has 64 digital I/O pins. 64.

The FPGA itself has more than 64 pins, but they're already soldered to the SDRAM/HDMI port/etc that are built into that card. And the FPGA itself that has enough pins to do this is a BGA, which means it's pretty flatly a no-go for homebrew. That whole section about breaking it up was a grasp at "well, how could we do this with FPGAs that are available in solder-able packages" instead of modules.

Frankly I think I've pretty much convinced myself there's a real possibility of essentially using the Amiga-designed card hardware design nearly unmodified(*1) beyond putting a Nubus connector on it instead of Zorro II and re-writing the bus module to speak Nubus instead of 68000. The design might *also* work nearly unmodified for a 16 bit LC PDS, that is also true, and *that* may well be less work because you could probably reuse a lot of the 68000 bus code, but I'm not entirely sure about that because there are some details of bus decoding with PDS slots I'm not entirely sure about, i.e., whether you actually need to decode all 32 address lines when you're using the "pseudoslot" assignment or not.(*2) But a "direct refit" won't scale to 32 bit PDS. Period. *Maybe* you can make it fit by adding a little external logic to handle the bus sizing signals and only using a 68030 PDS as a 16 bit slot, but this is not an option for 68040 PDS.

If we throw out at least the physical design of the Amiga video card this all started with, *maybe* a 32 bit PDS version will all fit with that Spartan6 dev board that was mentioned a few pages ago, as it says it has 108 pins? (But it also doesn't have the HDMI ports already implemented.) Assuming all 108 quoted are actually *usable* (sometimes on boards like this I/Os are only usable if you're not using some of the hardware built into the motherboard) with the DRAM enabled then... maybe. It'll be tight.

(*1 with the proviso that the video module will need to be redesigned so it uses Mac-compatible pixel packing.)

(*2: Here is what it says in "Designing Cards and Drivers", 3rd edition, about PDS slots:

"To ensure compatibility with future hardware and software, you should decode all the address bits to minimize the chance of address conflicts. To ensure that the Slot Manager recognizes your card, make sure that the declaration ROM resides at the upper address limit of the 16 MB address space."

This sounds to me like you need to care about at *least* 24 address lines.... no actually. Maybe I'm wrong but this:

"Notice that the /NUBUS signal (Table 15-7) is an address decode of the memory range $60000000 through $FFFF FFFF. The /AS (address strobe) signal qualifies the assertion of the /NUBUS signal. The /NUBUS signal is asserted a maximum of 26 ns after the /AS signal is asserted, and is removed a maximum of 22 ns after the /AS signal is removed.

Remember that /NUBUS is valid when the processor is accessing the on-board video logic; therefore, to avoid possible data bus conflicts, you must decode one of the pseudoslot address ranges when using the /NUBUS signal as a qualifier."

This makes it sound like you need to worry about the whole enchilada. Now, it certainly would be possible to slap some additional logic outside of the FPGA to sit on these address lines and do the necessary decoding, they don't *have* to terminate on the FPGA, but that means at the very least PDS will need more parts unless you find an even bigger FPGA module.)
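To make the decoding question in that footnote a bit more concrete, here is a rough Python sketch (a behavioral model, not HDL) of the comparison the card's bus logic would have to make. It assumes the usual NuBus-style layout in which slot $s owns the 16 MB standard slot space $Fs00 0000 through $FsFF FFFF; the pseudoslot number $9 is picked purely as an example, and all function names are made up for illustration. Which pseudoslot a real card should claim depends on the target machine and is not settled anywhere in this thread.

```python
SLOT_SPACE_SIZE = 0x0100_0000  # 16 MB of standard slot space per slot


def slot_base(slot_id: int) -> int:
    """Base of the standard slot space for a slot, i.e. $Fs00 0000."""
    if not 0x0 <= slot_id <= 0xF:
        raise ValueError("slot id must fit in 4 bits")
    return 0xF000_0000 | (slot_id << 24)


def in_nubus_range(addr: int) -> bool:
    """Range the quoted manual text gives for /NUBUS: $6000 0000 to $FFFF FFFF."""
    return 0x6000_0000 <= addr <= 0xFFFF_FFFF


def card_selected(addr: int, slot_id: int) -> bool:
    """Full decode of the slot select: the top 8 address bits must equal $Fs."""
    return (addr >> 24) == (0xF0 | slot_id)


def decl_rom_top(slot_id: int) -> int:
    """Last byte of the slot space, where the declaration ROM has to end."""
    return slot_base(slot_id) + SLOT_SPACE_SIZE - 1


EXAMPLE_SLOT = 0x9  # hypothetical pseudoslot, chosen only for illustration

print(hex(slot_base(EXAMPLE_SLOT)), hex(decl_rom_top(EXAMPLE_SLOT)))
# /NUBUS alone is not enough: this address is in range but belongs to another slot.
print(in_nubus_range(0xFE00_0000), card_selected(0xFE00_0000, EXAMPLE_SLOT))
```

The point of the sketch is only that the select needs the top eight address bits on top of whatever low-order lines address the frame buffer and ROM, which is where the pin count for a PDS card starts to add up.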

"I'm very familiar with NuBus and PDS in terms of Card and Driver Development"

Are you? You didn't seem aware of things like there actually isn't such a thing as "16 bit mode" for Nubus, which is something that comes to light pretty quickly when you read the manuals. (Yes, there's the "bus lane" thing, but that doesn't apply to any "RAM-like" device like a video card *except* for the declaration ROM. They're *very* clear about that.) I get that you have it really drilled into your head that the two kinds of cards "look alike" if you use the "Pseudoslot" methodology, and, sure, that's great when you're talking about what you're sticking on the declaration ROM, but that cart is so far in front of this horse it's not even funny.

(Well, okay, there's another place where byte lanes come into play, the whole "endian-ness" thing which is kind of a pain in the neck, or at least it is when you don't have umpteen gazillion gates inside of an FPGA at your disposal and have to build the card with generic 7400 logic and PALs.)

"Understood, actually I never suggested it was. I've only been talking about the implementation of that logic, it's been problematic for engineers to do in the past and may be so at present."

No, you've been excessively harping on how hard it is, and the bulk of your evidence seems to be a Byte article that came out the same year as the first Mac using the bus, as if nothing has been learned since then. To be clear, I'm not pretending that writing an FPGA Nubus implementation is going to be a walk in the park, but, seriously, we do have the manual and years worth of tech notes and addendums to work off of.

"NuBus saves but a bit less than 32 lines over a PDS implementation and when introduced in the SE/30 at 16MHz could be likened to running on the wet sand at the shoreline as compared to NuBus at 10MHz running in the dry sand. PDS transitioned..."

So what? Seriously, here you are again making a case that essentially boils down to "anything less than better than the best Apple ever made, ever, is totally a waste of time, and therefore we have to make sure we fully exploit the fastest, highest-frequency, most electrically difficult to exploit expansion port available to us for the first revision!" Again, gotta say it, doesn't seem like a helpful attitude. And more than half your last post consisted of this sort of thing. Approximately 64% of it if my broad strokes pipe through "wc" is correct.

This idea that this has to be the best, fastest video card ever does not seem to have been a requirement of the OP, nor several other people who chimed in and said it'd be nice just to have an alternative to increasingly hard to find decent NuBus cards that aren't very friendly with modern monitors. Why are you so intent on making WARP SPEED a non-negotiable item on the feature list? You do realize how slow *all* the machines you might target with this are, right? That stupid little SPI video card on a CPLD I tossed out earlier can handle about 25 640x480x256 full frames per second on a 62MHz single bit SPI bus. That's about seven and a half megabytes a second sustained. I don't think there is any 680x0 Mac that can meaningfully do that, full-screen 480p video @25Hz. I'm including the 840av in this. Performance is *really* a ridiculous thing to harp on this hard.
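For anyone who wants to check the arithmetic behind that figure, here is a small Python sketch. It assumes one byte per pixel for 256 colours and ignores any SPI command or addressing overhead, so it gives a ceiling rather than a measured rate; the function names are made up for illustration.

```python
WIDTH, HEIGHT = 640, 480
BYTES_PER_PIXEL = 1          # 256 colours = 8 bits per pixel (assumption)
FRAMES_PER_SECOND = 25
SPI_CLOCK_HZ = 62_000_000    # single-bit SPI: one bit per clock


def frame_bytes(width: int, height: int, bytes_per_pixel: int) -> int:
    """Size of one full frame in bytes."""
    return width * height * bytes_per_pixel


def spi_bytes_per_second(clock_hz: int, data_lines: int = 1) -> float:
    """Raw SPI throughput, ignoring protocol overhead."""
    return clock_hz * data_lines / 8


frame = frame_bytes(WIDTH, HEIGHT, BYTES_PER_PIXEL)
needed = frame * FRAMES_PER_SECOND
available = spi_bytes_per_second(SPI_CLOCK_HZ)

print(f"one frame:        {frame} bytes")
print(f"needed at 25 fps: {needed / 1e6:.2f} MB/s")
print(f"raw SPI ceiling:  {available / 1e6:.2f} MB/s")
print(f"max full frames:  {available / frame:.1f} per second")
```

A full frame is 307,200 bytes, so 25 frames a second needs 7.68 MB/s against a raw ceiling of 7.75 MB/s on the SPI link, which matches the "about seven and a half megabytes a second" quoted above.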

"You said earlier that I might need to draw a picture of what I've been trying to get across to you and I think it's time to do exactly that for the next chapter. Words so often, if not always fail me. Bunsen suggested much the same years back and said doing it helped immensely."

I'm very much looking forward to this illustration.

I wander in having read none of the thread:

Are you confusing the fact that many Quadras attach their video cards directly to the processor in what you could logically think of as a PDS "slot" with the idea that any new development necessarily should be PDS?

It makes more sense to conceive of and develop a modern graphics card for these machines as NuBus first in order to meet the needs of the largest number of machines, especially any number of machines that don't have onboard graphics at all, such as the II, IIx, IIcx, and IIfx, or any random NuBus Mac from the II all the way through to the 8100 where the ability to use a newer HDMI based display would be an advantage to someone who doesn't already have Apple monitors laying around.

This shouldn't be turned into an attempt to build an upgrade for the SE/30, nor should it, strictly speaking, be an attempt to build something "fast" (at least not at first). That's not what was asked for.

dlv

Active member

Hi all - I'm sorry I've been remiss posting updates.

At this point, I have the FPGA, its JTAG programmer, and development environment (on my Windows 10 machine) all working. I'm able to upload and write bitstreams, get LEDs to turn on with the push of a button, etc. All the basics I had originally set out to do. I've been reading FPGA tutorials and looking through the VA2000 code, especially to understand how the author implemented the state machine for the Zorro II/III bus.

Documentation-wise I've read through (mostly IIfx-relevant parts of the) 68030 PDS discussion in DCaDftMF3e. It was enlightening but it appears I need to go back and understand parts of the NuBus documentation because the PDS documentation largely focused on the physical and electrical design guidelines. I also continue to pore over technical specifications for various components on the FPGA dev board, the FPGA itself, HDMI/TMDS, etc.

I've also started a draft of the schematic for the FPGA carrier board. Gorgonops is correct that interfacing the FPGA with HDMI and SDRAM/DDR memory is a solved problem (indeed, many decisions I've already made have been in the hope of recycling as much of the VA2000 project as possible). Getting it electrically hooked up to a NuBus/PDS bus is a matter of a handful of level converters. The FPGA can drive HDMI signals directly. And so the carrier board will essentially be an adapter. This also means the meat of this project is going to be in the FPGA code, specifically interfacing between NuBus/PDS (DeclROM, pseudoslot addressing, etc) and the FPGA modules driving HDMI and SDRAM.

Where I left off was pin I/O management: The FPGA dev board makes available 108 I/O pins (the FPGA itself has a lot more, some of which are used to connect to the onboard SDRAM and SPI, etc). The (IIfx) 68030 PDS slot has a 120 pin connector of which it appears 24 pins do not need to be connected to the FPGA (because they're tied to power, ground, reserved, etc), which leaves 96 pins. HDMI appears to require (from the VA2000 schematic) two pins each (a differential pair) for DATA0, DATA1, DATA2, and CLK and another two pins for SCL and SDA (for EDID). So it's tight - we're just under, at 106 I/O pins - but we also need pins to drive the direction of the level shifters, which is where things get tricky: What signals are unidirectional? Bidirectional? When? Which can be grouped together? I believe I need to revisit the MC68030 documentation for answers. If we need more than 108 I/O pins, are there PDS pins whose signals we do not need? Perhaps it would be good to go back to a NuBus design?
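The pin budget above can be written down as a quick Python sketch. The counts are the ones stated in the post; the number of level-shifter direction pins is not known yet, so `direction_control_pins` is a hypothetical parameter to play with rather than a real figure, and the function name is made up for illustration.

```python
DEV_BOARD_IO = 108          # I/O pins the FPGA dev board makes available
PDS_CONNECTOR_PINS = 120    # (IIfx) 68030 PDS connector
PDS_NOT_CONNECTED = 24      # power, ground, reserved, etc.
HDMI_DIFF_PAIRS = 4         # DATA0, DATA1, DATA2, CLK
HDMI_DDC_PINS = 2           # SCL and SDA for EDID


def pins_needed(direction_control_pins: int = 0) -> int:
    """Total FPGA I/O pins for PDS plus HDMI plus level-shifter direction control."""
    pds = PDS_CONNECTOR_PINS - PDS_NOT_CONNECTED
    hdmi = HDMI_DIFF_PAIRS * 2 + HDMI_DDC_PINS
    return pds + hdmi + direction_control_pins


base = pins_needed()
spare = DEV_BOARD_IO - base
print(f"PDS + HDMI: {base} pins, {spare} spare for direction control")

# How many direction-control pins fit before the budget is blown?
for extra in range(0, 5):
    total = pins_needed(extra)
    print(extra, total, "fits" if total <= DEV_BOARD_IO else "over budget")
```

With 96 PDS pins and 10 for HDMI the total is 106, leaving only two spare pins on the 108-pin board, so more than two independent direction-control signals would push the design over unless some PDS signals can be dropped or grouped.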

My goal now is developing this carrier board because that appears to be the prerequisite before anything interesting can happen. Since I don't have the time, resources, and experience to make PCBs at home, I plan to submit the design to a fab. But that means less room for experimenting / need to get things right the first time. Once I have a carrier board in hand, some good first experiments would be outputting something to HDMI, or as Gorgonops suggested, writing to a register and blinking LEDs on the FPGA dev board. Once those kinds of experiments are working, I think further development will be rapid since it's a matter of hooking together the existing FPGA modules.

I found from the "Macintosh Quadra 900 Developer Notes" document that the 68040 PDS connector on the motherboard is a KEL 8817-140-170SH. The PDS card connector is a KEL 8807-140-170LH. From what I've seen, these connectors are difficult to source except at wholesale quantities. I've resigned myself to the fact that a PDS video card for my Quadra 950 is unlikely, at least for a first iteration.

Note: I've been targeting the IIfx because that's the only 68030 Mac I would be interested in collecting, but a IIsi might be cheaper for this project (and less of a big deal if I break it in the process)... If anyone has either of these available, please speak with me.

Trash80toHP_Mini

NIGHT STALKER

"My goal now is developing this carrier board because that appears to be the requisite before anything interesting can happen. Once I have a carrier board in hand, some good first experiments would be outputting something to HDMI, or as Gorgonops suggested, writing to a register and blinking LEDs on the FPGA dev board.

I found from the 'Macintosh Quadra 900 Developer Notes' document that the 68040 PDS connector on the motherboard is a KEL 8817-140-170SH. The PDS card connector is a KEL 8807-140-170LH. From what I've seen, these connectors are difficult to source except at wholesale quantities. I've resigned to the fact that a PDS video card for my Quadra 950 is unlikely, at least for a first iteration."

Thank you! Update much appreciated.

Keeping that PDS connector in your Q950 in mind was all but the whole of what I was trying to say. Very glad to hear you're interested in that with NuBus as the first course. The IIfx is far more interesting/accessible in many ways than Q950 and the IIsi is definitely the way to go for a first run at PDS, that's why I suggested it early on evenif as a retrofit for a successful NuBus card in the first go 'round. So we're talking about exactly the same three machines in terms of a run at PDS. For a second iteration of the project if and when, those machines would be a lot of fun.

Cory, I'm not suggesting in any way "that any new development necessarily should be PDS?" I originally mentioned SE/30 in terms of development because so many here are familiar with developing for it. I used it for the I/O speed comparisons above, but not as a direct target machine, that would be an offshoot of the IIsi/IIfx line of development. No way am I suggesting we need turn this into an SE/30 card development.

G, lest I forget:

Yep, we've been over that "except when" gotcha already in-thread when I made that ill-fated, ill conceived suggestion off the top of my head. Point taken and "bus lane" nomenclature correction noted.You didn't seem aware of things like there actually isn't such a thing as "16 bit mode" for Nubus, which is something that comes to light pretty quickly when you read the manuals. (Yes, there's the "bus lane" thing, but that doesn't apply to any "RAM-like" device like a video card *except* for the declaration ROM. They're *very* clear about that.) I get that you have it really drilled into your head that the two kinds of cards "look alike" if you use the "Psuedoslot" methodology, and, sure, that's great when you're talking about what you're sticking on the declaration ROM, but that cart is so far in front of this horse it's not even funny.

My thinking all along has been that we were still talking about using a pair of FPGAs per your earlier suggestion up until you made your recent suggestion of doing everything on a single FPGA on NuBus, sans external memory for a Black & White test card. I'll stop with PDS commentary until the proper time or after I've sorted out what I have been unsuccessfully trying to say in the set of various block diagrams dancing around in my head. :blink:

BTW, the pad pattern of the 040 PDS connector bears a remarkable resemblance to a tighter pitch PCI type edge card connector slot. If a compatible slot connector should be available, my Quadra 950 board is at your disposal for retrofit and experimentation, dlv. That higher count 040 pin pattern, like the PCI connector, also fits within the 10cm x 10cm SEEED square. But then the choice would be between a SATA SSD card and VidCard development for the Q950, hrmmm?

> It was enlightening but it appears I need to go back and understand parts of the NuBus documentation because the PDS documentation largely focused on the physical and electrical design guidelines.

This is one of the major reasons why I've grown so cagey and irascible about the contention that PDS is "easier"; the documentation at least in the three versions of DCaDftMF3e I've spent much time poking through seem to largely hand off documenting the 680x0 bus protocols to the original Motorola manuals. (IE, to design a PDS card you need to fuse the physical/electrical design guidelines from the Apple manual with the protocol information from the Motorola documentation.) That was probably fine back in 1990 because there was a good chance anyone working in the field was at least noddingly familiar with the Motorola busses, but today, maybe not so much? Maybe this is a foolishly optimistic statement, but in that light it seems like NuBus *may* at least have the advantage of the Apple-specific documentation being more complete and comprehensive for this *specific* application.

(Perhaps I'm just too stupid to feel like raw 680x0 is easier because I've only had direct experience poking with simpler busses like 6502 and Z-80, so 680x0 looks pretty complicated by comparison, while at the same time I'm not super intimidated by the fact that NuBus has "protocol" because signal processing is what FPGAs are really good at and what looked like a lot of overhead in terms of discrete registers and buffers in 1987 is absolutely nothing for them.)

In any case, I'm glad you're still working on the project. It'd gone all quiet there, I was really worried that the rumors of impossibility had scared you off.

dlv

Active member

> Keeping that PDS connector in your Q950 in mind was all but the whole of what I was trying to say. Very glad to hear you're interested in that with NuBus as the first course. The IIfx is far more interesting/accessible in many ways than Q950 and the IIsi is definitely the way to go for a first run at PDS, that's why I suggested it early on even if as a retrofit for a successful NuBus card in the first go 'round. So we're talking about exactly the same three machines in terms of a run at PDS. For a second iteration of the project if and when, those machines would be a lot of fun.

Well, if only because these machines are well documented in documentation accessible to me. I suspect the number of available I/O pins on my FPGA board is likely to dictate the decision between NuBus and PDS. State machines appear to be natural to implement in an FPGA so the prospect of implementing NuBus isn't as daunting as it once was, although by no means do I expect it to be trivial. I know you had some concerns about the speed of NuBus, which I share, but that seems like a later concern compared to getting it to display anything.

> BTW, the pad pattern of the 040 PDS connector bears a remarkable resemblance to a tighter pitch PCI type edge card connector slot. If a compatible slot connector should be available, my Quadra 950 board is at your disposal for retrofit and experimentation, dlv. That higher count 040 pin pattern, like the PCI connector, also fits within the 10cm x 10cm SEEED square. But then the choice would be between a SATA SSD card and VidCard development for the Q950, hrmmm?

Thanks for the offer. Alas, a 68040 PDS card is extremely unlikely, not only because the connector is challenging to source but because the additional I/O lines would put it beyond the number of I/O pins available on all FPGA development boards I've seen. It would still be possible to press forward but would require designing the entire card from scratch (perhaps copying portions of the design from an FPGA development board which is what the VA2000 project did from the miniSpartan6+), and then soldering the FPGA's BGA package and other components onto it. But that's well beyond what can be done (reliably) at home, so it would be necessary to reach out to a fab to not only manufacture the PCB but assemble and place components too... That only makes sense at scale, which requires capital. In that case, it would likely have been realized as a NuBus design in the first place, since it would then work in the greatest number of machines.

But it's fun to dream

Trash80toHP_Mini

NIGHT STALKER

First off, my butt is now firmly planted on a seat in the bus to NuBus. That said, one last clarification.

> No, you've been excessively harping on how hard it is, and the bulk of your evidence seems to be a Byte article that came out the same year as the first Mac using the bus, as if nothing has been learned since then. To be clear, I'm not pretending that writing an FPGA Nubus implementation is going to be a walk in the park, but, seriously, we do have the manual and years worth of tech notes and addendums to work off of.

That's exactly what I'd said on the previous page after describing those early difficulties: "That said, there have been two revised editions of the 1987 DCaDftMIIaMSE they were working from, so it might be a bit more straightforward at this point?" We're maybe a bit more on the same page than you think, G. Maybe I'm just way too far off on the margin . . . :blink:

I wonder if this might be harvested somewhere:

Dunno if it would help, but might be worth taking a stroll three decades past?

> Editor's note: The source code and executable code for the NuBus Monitor described in this article are available in a variety of formats. See page 3 for details. They are also available on the Thousand Oaks Technical Database, (805) 492-5472 and (805) 493-1495. The NTC schematic and PAL listings are also available on BIX.
>
> P.3 Exploring with the NuBus Monitor
>
> Our NuBus Monitor allows you to copy, display, fill, and substitute memory while the NuBus is in the 32-bit or 24-bit mode. While we wrote it to access the Apple NuBus Test Card and the co-processor boards that we were developing, the NuBus Monitor is a convenient way to explore the NuBus address space of the Mac II. The tricks we had to use writing this Monitor are the same as those a developer will need to master to get a driver functioning in the Mac II NuBus environment. It is written in Lightspeed C 2.15.
>
> When first invoked, the NuBus Monitor searches for the Mac II's video board. The Monitor starts in the 32-bit mode and looks for a video board occupying one of the slots. It can detect either an Apple Mac II video board or a SuperMac video board by the signature of their configuration ROM. The video board is usually located in slot 9 (the leftmost slot, looking from the front of the machine), although this is not a requirement. From this NuBus slot, the video board appears at address F900 0000h in the 32-bit mode. You should see the prompt McCray ( f9000000) >.
>
> The displayed address will vary depending on which slot the video board is placed in. This address points to the base address of the video board, so other Monitor commands can be issued using only the lower part of the full slot address. If the NuBus Monitor fails to detect the presence of a video board other than the two described, you can enter the address offset manually by issuing the command 0 fN000000. This sets the NuBus Monitor's operational address offset to slot n. Use the offset number n (9h to Eh) appropriate for your video board location.
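For anyone following along, the slot-to-address arithmetic the article describes can be sketched in a few lines of Python. This is only an illustration: the function names are made up here, the 32-bit form follows the F900 0000h example quoted above, and the 24-bit form (slot number in the top nibble of a 24-bit address) is an assumption based on the usual Mac II address map rather than anything stated in the article.

```python
def slot_base_32bit(slot: int) -> int:
    """Base address of a slot's standard slot space in 32-bit mode (Fs00 0000h)."""
    if not 0x9 <= slot <= 0xE:
        # The article gives 9h to Eh as the valid Mac II slot numbers.
        raise ValueError(f"slot must be in 9h..Eh, got {slot:X}h")
    return 0xF0000000 | (slot << 24)


def slot_base_24bit(slot: int) -> int:
    """Assumed base address of the same slot in 24-bit mode (s0 0000h)."""
    if not 0x9 <= slot <= 0xE:
        raise ValueError(f"slot must be in 9h..Eh, got {slot:X}h")
    return slot << 20


if __name__ == "__main__":
    for s in range(0x9, 0xF):
        print(f"slot {s:X}h: 32-bit {slot_base_32bit(s):08X}h, "
              f"24-bit {slot_base_24bit(s):06X}h")
```

A card in slot 9 comes out at F9000000h in 32-bit mode, which matches the address in the Monitor prompt above.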

@dlv If 'net spelunking turns up that utility, I've got Mac II era Vidcards from Apple and a SuperMac card available if needs be.

dlv

Active member

> This is one of the major reasons why I've grown so cagey and irascible about the contention that PDS is "easier"; the documentation at least in the three versions of DCaDftMF3e I've spent much time poking through seem to largely hand off documenting the 680x0 bus protocols to the original Motorola manuals. (IE, to design a PDS card you need to fuse the physical/electrical design guidelines from the Apple manual with the protocol information from the Motorola documentation.) That was probably fine back in 1990 because there was a good chance anyone working in the field was at least noddingly familiar with the Motorola busses, but today, maybe not so much? Maybe this is a foolishly optimistic statement, but in that light it seems like NuBus *may* at least have the advantage of the Apple-specific documentation being more complete and comprehensive for this *specific* application.

That's exactly the same conclusion I have come to regarding a PDS design vs NuBus design. I read through a good chunk of the Motorola 68030 manual two years ago to try to understand what would be involved with creating a quad-processor homebrew 68030 computer (entirely too ambitious), but it has been long enough that I would need to revisit it.

I don't expect PDS will be "easier" but in a way, it's "better understood" (modulo Apple-specific stuff) because at that level, you can discuss general computer architecture design and techniques.

> In any case, I'm glad you're still working on the project. It'd gone all quiet there, I was really worried that the rumors of impossibility had scared you off.

I think this project is actually feasible, given that the VA2000 and similar open-source projects have led the way and done the bulk of the work. As you identified, the main challenge is the PDS/NuBus interface and hooking everything up. I expect large portions to be borrowed wholesale from the VA2000 project.

That said, I have a professional career and life, so progress will be sporadic

> the main challenge is the PDS/NuBus interface and hooking everything up

Yeah, I think if the bus glue, whichever bus it is, can be sorted out you're 95% there.

Getting the build environment together to build the Declaration ROMs might be fun. I've skimmed through the "Toby" description in the Rev. 3 design book again and spent a little time sifting through the assembly language driver in the appendix, and I'm not terribly ashamed to admit there are some things in there I haven't come close to wrapping my head around. For instance, the framebuffer RAM mapping is pretty straightforward, but the documentation also refers to the "control space" assigned to the card for the hardware registers (IE, CLUT memory, video mode configuration registers, etc), and exactly where that space is mapped into the slot space (IE, where it *should* be mapped, in both 32 and 24 bit modes) is... not super clear if you're just skimming, I guess. It's also sort of amusing to me that the driver code listing has passages like this in it:

> Hardware implementations vary greatly here. Usually, based on the csStart parameter, the code will separately implement sequential and indexed CLUT writes. If these routines use substantial stack space, they should be careful to check that this amount of space is available.
>
> Bsr HWWriteCLUT left as an exercise for the reader

IE, they've left out some of the hardware specific code and left it for you to figure out, so it's not really a *complete* example.
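To make the "sequential and indexed CLUT writes" distinction from the listing above concrete, here is a rough Python model of what the missing routine has to decide. Everything in it is illustrative: `write_clut` and the tuple layout are made-up names, and treating a `cs_start` of -1 as "each entry carries its own index" is my recollection of the Mac video driver convention, so take it as an assumption to verify against the book rather than as documented behaviour.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]

# Assumed sentinel: cs_start == -1 selects indexed writes (verify against the docs).
INDEXED = -1


def write_clut(clut: List[RGB], cs_start: int,
               entries: List[Tuple[int, RGB]]) -> None:
    """Write colour entries into a model CLUT, in place.

    Each entry is (value, rgb). In sequential mode the value field is ignored
    and entries land at cs_start, cs_start + 1, and so on. In indexed mode the
    value field of each entry is the CLUT position to write.
    """
    for offset, (value, rgb) in enumerate(entries):
        index = value if cs_start == INDEXED else cs_start + offset
        if not 0 <= index < len(clut):
            raise IndexError(f"CLUT index {index} out of range")
        clut[index] = rgb


if __name__ == "__main__":
    clut = [(0, 0, 0)] * 256
    write_clut(clut, 10, [(0, (255, 0, 0)), (0, (0, 255, 0))])        # sequential
    write_clut(clut, INDEXED, [(200, (0, 0, 255)), (3, (1, 2, 3))])   # indexed
    print(clut[10], clut[11], clut[200], clut[3])
```

On an FPGA card the body of the loop would presumably become a write to a CLUT address register followed by the colour data, but that part depends entirely on how the card's control space ends up being laid out.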

Nothing deal breaking here, and you have some real advantages with an FPGA design like, for instance, you can "hardcode" all the video modes you want to support into the FPGA so all you need to do is map a single register you throw a mode number at, instead of having to implement on the Mac side the software to program a general purpose "CRTC" analog. But it definitely would probably help to see about drafting someone who's written a Mac driver before.
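As a sketch of the "single register you throw a mode number at" idea, the card-side behaviour could be modelled like this in Python. The mode numbers, the table contents, and the `ModeRegister` class are all hypothetical, picked only to illustrate the shape of the thing; a real card would define its own list and would hold the matching timing parameters inside the FPGA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VideoMode:
    width: int
    height: int
    bits_per_pixel: int

    def row_bytes(self) -> int:
        # Bytes per scanline, rounded up to a whole byte.
        return (self.width * self.bits_per_pixel + 7) // 8

    def framebuffer_bytes(self) -> int:
        return self.row_bytes() * self.height


# Hypothetical hardcoded mode table; the numbers are arbitrary examples.
MODES = {
    0: VideoMode(640, 480, 1),    # B&W smoke-test mode
    1: VideoMode(640, 480, 8),    # 256-colour indexed
    2: VideoMode(1280, 720, 32),  # true colour
}


class ModeRegister:
    """Model of a one-register mode select: the driver writes a mode number."""

    def __init__(self) -> None:
        self.current = MODES[0]

    def write(self, mode_number: int) -> bool:
        """Switch modes; unknown numbers are ignored and reported as False."""
        mode = MODES.get(mode_number)
        if mode is None:
            return False
        self.current = mode
        return True


if __name__ == "__main__":
    reg = ModeRegister()
    for n in (0, 1, 2, 7):
        ok = reg.write(n)
        print(n, ok, reg.current, reg.current.framebuffer_bytes())
```

The driver side then only needs to know the table of mode numbers, which is a lot less code than programming CRTC-style timing registers from the Mac.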

> That's exactly what I'd said on the previous page after describing those early difficulties: "That said, there have been two revised editions of the 1987 DCaDftMIIaMSE they were working from, so it might be a bit more straightforward at this point?" We're maybe a bit more on the same page than you think, G. Maybe I'm just way too far off on the margin . . .

Sure, but the context in which you brought it up really came across as you saying "See, NuBus is so hard these guys couldn't figure it out even with an Apple engineer's help!" before yet again launching into more extraneous reasons why PDS has to come first and you're wasting your time anyway with NuBus because 10mhz slow overcomplicated kludge local bus better... etc.

Anyway, if you're not done re-litigating this whole thing by all means continue?

> But it definitely would probably help to see about drafting someone who's written a Mac driver before.

Responding to myself, I know, but I wonder if this is an area where looking at how, say, BasiliskII's video driver works might be useful. BasiliskII might actually be a helpful model here because I don't think it emulates any sort of programmable video hardware, it just throws color change/mode requests at a port implemented as a virtual hardware stub. If we did make the FPGA hide all that complexity the resulting card would kind of look like BasiliskII's emulated video card?

Trash80toHP_Mini

NIGHT STALKER

> Sure, but the context in which you brought it up really came across as you saying "See, NuBus is so hard these guys couldn't figure it out even with an Apple engineer's help!" . . .

Never said they didn't figure it out, they did, or that they couldn't do it without help from Apple, they could have. Sounds like it was a PITA even with help to me though. I'll grant you that what I tried to express came across really badly, such is my lot in life when it comes to words. :mellow:

> . . . before yet again launching into more extraneous reasons why PDS has to come first and you're wasting your time anyway with NuBus because 10mhz slow overcomplicated kludge local bus better... etc.
>
> Anyway, if you're not done re-litigating this whole thing by all means continue?

And the above is not argumentative? Whatever, no need for further litigation, that was a pretty damn good synopsis and entertaining to boot. :approve: BTW, you got to me again with that "16bit mode" snark. Dunno, whatever saves on I/O lines will do.

> the framebuffer RAM mapping is pretty straightforward, but the documentation also refers to the "control space" assigned to the card for the hardware registers (IE, CLUT memory, video mode configuration registers, etc), and exactly where that space is mapped into the slot space (IE, where it *should* be mapped, in both 32 and 24 bit modes) is... not super clear if you're just skimming, I guess. It's also sort of amusing to me that the driver code listing has passages like this in it: . . .
>
> Hardware implementations vary greatly here. Usually, based on the csStart parameter, the code will separately implement sequential and indexed CLUT writes. If these routines use substantial stack space, they should be careful to check that this amount of space is available.
>
> Bsr HWWriteCLUT left as an exercise for the reader
>
> IE, they've left out some of the hardware specific code and left it for you to figure out, so it's not really a *complete* example.

EVERY example in those damn books leaves out part of the picture! One less thing to worry about though, the only iterations we're talking about AFAIK are B&W and 24-bit. CLUT complications are scraped off the plate and straight down the garbage disposal right from the get go!

> the only iterations we're talking about AFAIK are B&W and 24-bit. CLUT complications are scraped off the plate and straight down the garbage disposal right from the get go!

*Many* color Macintosh games break in non-indexed modes, it's pretty much a requirement that a general purpose video card support at least 256 color, and 16 color support would be a good idea. (Can probably skip 4 color/grays, a significant number of original Mac cards, possibly a plurality, didn't do it.)

Just supporting B&W *or* true color certainly works as a smoke test, for the multitude of reasons noted before, but there's no dodging this being something that needs to be figured out eventually. I don't think we really *need* the code from some real Apple video card to do that, I'm just disappointed that the full source to some real card isn't in there because the more example code that touches the "control plane" the better.

I do wonder if it's possible the full source for the reference card in the book might be on some developer CD or long-ago mirror of an Apple FTP site, however. It does make more than a little sense that they'd feel the need to condense it to avoid making the manual a bazillion pages long.

Trash80toHP_Mini

NIGHT STALKER

iCrap! Forgot about gaming, for that you'll definitely need support for microscopic resolutions (640x480) and limited color palette. My only real interest here is in 720p or whatnot in 24bit on a cheap as 1080p display over HDMI.

For some reason "Beavis and Butthead's Excellent CD" comes to mind . . . well, those were the second two names that came to mind. The others had to do with an excellent adventure or some such nonsense, but not the names on the CD title I think? Wasn't that the first off Developer CD? If so, it might be the first place to look? The CD came up in a reference I found while getting the Source Documentation List lined up, IIRC.

A fun thing to implement in the video section, depending on how difficult it'd be to pull off, would be rotated display support. Many small LCD displays are available with stands that allow rotating the screen 90 degrees; I have two rotation-capable 17"-ish 1600x900 displays lying around that I think are pretty useless for anything modern in portrait mode because 900 pixels wide just isn't enough, but a 900x1600 portrait would be fantastic for a prehistoric version of PageMaker.

Engineering the address generation scheme to make that transparent would certainly be an interesting challenge...

dlv

Active member

> *Many* color Macintosh games break in non-indexed modes, it's pretty much a requirement that a general purpose video card support at least 256 color, and 16 color support would be a good idea. (Can probably skip 4 color/grays, a significant number of original Mac cards, possibly a plurality, didn't do it.)
>
> Just supporting B&W *or* true color certainly works as a smoke test, for the multitude of reasons noted before, but there's no dodging this being something that needs to be figured out eventually.

Oh hmm... I dismissed the need to support CLUTs (my own interest is in true color at 1080p) but that totally makes sense for a general purpose video card.

> I wonder if this is an area where looking at how, say, BasiliskII's video driver works might be useful. BasiliskII might actually be a helpful model here because I don't think it emulates any sort of programmable video hardware, it just throws color change/mode requests at a port implemented as a virtual hardware stub.

I recall someone suggested this earlier in the thread. I agree it could be worthwhile for hints and testing assumptions in software. Perhaps even a software implementation.

> A fun thing to implement in the video section, depending on how difficult it'd be to pull off, would be rotated display support.

I suspect that would not be technically difficult to implement once everything else has been figured out but I wonder what is required in the software/driver/ROM side to make that option available. I know Apple developed portrait displays, so it must be possible. Another exciting possibility is dual screen support, provided the FPGA has the bandwidth to push that many pixels. In any case, we're kind of getting ahead of ourselves... Baby steps.

It's clear I need to do a more thorough reading of the NuBus documentation.

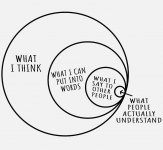

Regarding the challenge of communication... This is one of my favorite diagrams. We need to be patient with each other

> Oh hmm... I dismissed the need to support CLUTs (my own interest is in true color at 1080p) but that totally makes sense for a general purpose video card.

It's probably just top of mind for me because I occasionally play "Lemmings" with my kid on a PowerBook G4 under Classic, and that game falls into that category. (OS X's desktop looks *amazingly* bad in 256 color mode...) I'm sure the problem applies to more than games, so far as it goes; True-Color video cards were pretty rare until the 1990's and a lot of early color software was notorious about making assumptions.

> I suspect that would not be technically difficult to implement once everything else has been figured out but I wonder what is required in the software/driver/ROM side to make that option available. I know Apple developed portrait displays, so it must be possible. Another exciting possibility is dual screen support, provided the FPGA has the bandwidth to push that many pixels. In any case, we're kind of getting ahead of ourselves... Baby steps.

Baby steps, totally. I just figured since so many other crazy endgame wish items had been thrown out I'd add one.

What makes this an interesting challenge is today's "portrait displays" are different from the ones of old. The ones for the Macintosh (including devices like the "Pivot" displays that were dual-mode) had horizontal rasters in portrait mode, IE, they still look like normal 4x3 monitors, just taller. The way rotation is handled today is the monitor is still scanning the "normal" way (which would mean if it had a proper raster it would be scanning vertically) and the video card does the rotation. On most modern video cards I think that's handled by the GPU treating the output as if it were a rotated texture. Obviously a solution like that isn't going to work.

I'm thinking it wouldn't be too hard to do it just by restricting portrait modes to 8 bit color (or gray) or less so it's always a byte per pixel and just programming your display memory address generation to do the necessary crazy-stride through RAM on the output side. The reason for restricting to 8-bit or less in my mind is because once you go beyond that your RAM bandwidth requirements get sort of intimidating. (if you have 16 bit wide RAM like the FPGA board has then 32 bit color in portrait needs twice as many reads per pixel as true color landscape.)
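The "crazy-stride" address generation can be sketched roughly like this in Python. It assumes one byte per pixel, a row-major portrait framebuffer of `width` x `height` as the Mac sees it, and a panel that scans in its native landscape orientation after being physically turned 90 degrees clockwise; the function names are made up, and the other rotation direction would just flip which axis gets reversed.

```python
from typing import List


def rotated_scan_address(px: int, py: int, width: int, height: int) -> int:
    """Framebuffer byte offset for panel pixel (px, py).

    width and height describe the logical portrait framebuffer. The panel
    itself scans `height` pixels per line and has `width` lines.
    """
    if not (0 <= px < height and 0 <= py < width):
        raise ValueError("panel coordinate out of range")
    logical_x = (width - 1) - py  # panel lines run right-to-left across the page
    logical_y = px                # panel columns run top-to-bottom down the page
    return logical_y * width + logical_x


def scanline_addresses(py: int, width: int, height: int) -> List[int]:
    """All offsets fetched while the panel draws one of its native scanlines."""
    return [rotated_scan_address(px, py, width, height) for px in range(height)]


if __name__ == "__main__":
    # Tiny 3x4 portrait framebuffer so the pattern is easy to see.
    for line in range(3):
        print(line, scanline_addresses(line, 3, 4))
```

Each fetch along a panel scanline jumps by a full logical row (900 bytes for a 900x1600 portrait mode), so consecutive reads never fall on adjacent addresses, which is where the RAM bandwidth concern comes from.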

But, yea, this is a "someday" thought.